You submitted your application. You completed the test. And now you’re wondering – did a machine just decide your career? Welcome to 2026, where the first recruiter you face isn’t human.

The Algorithm That Reads You First

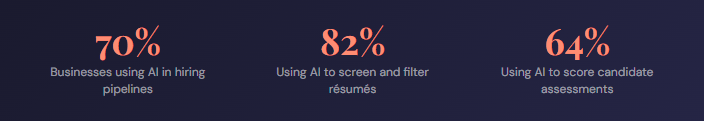

By 2026, around 70% of businesses use AI in their hiring process, with 82% relying on it to sift through résumés and 64% using it to review candidate assessments. For freshers, this means your first interaction with a company is almost certainly with an AI – not a human.

This raises a question that every fresher, career-switcher, and job seeker should be asking: how reliable is AI assessment accuracy, really? Is it a fair judge of your potential, or just a sophisticated filter that sorts candidates by pattern-matching? This blog breaks open the black box.

What Is AI Assessment Accuracy, Really?

AI assessment accuracy refers to how closely an algorithm’s evaluation of a candidate’s skills, behaviour, and potential matches that person’s actual real-world ability. It is not a single number. It is a composite of how well the system’s data, models, and calibration methods align with the truth of what a candidate can do.

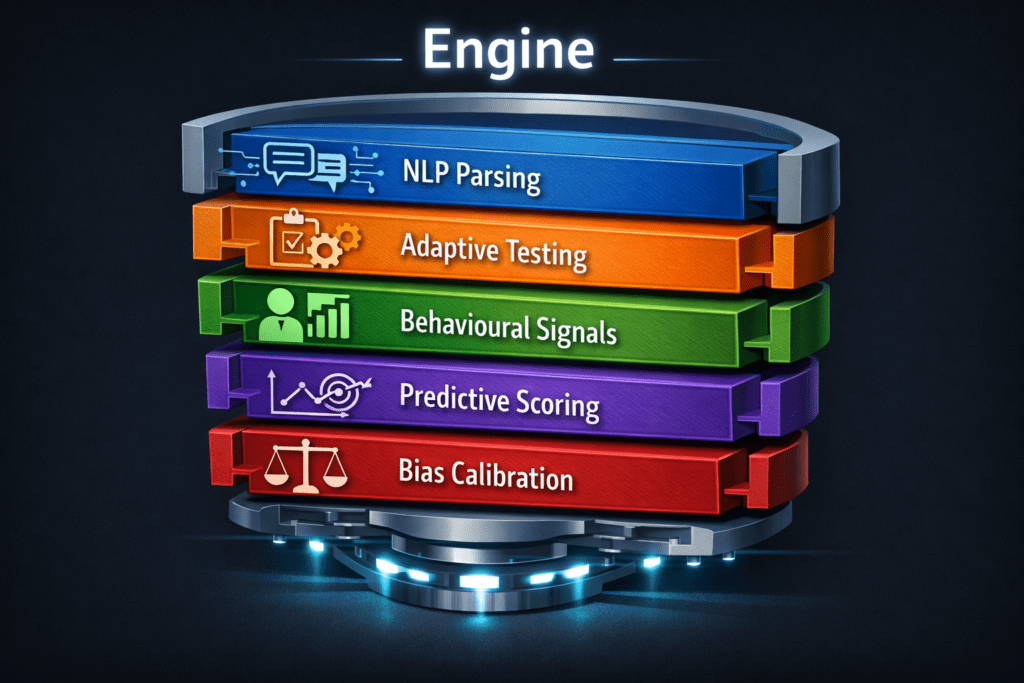

Most people treat AI-evaluated tests like a regular exam — finish it and forget it. But the engine underneath is far more complex. The score you receive is the output of multiple processing layers, each with its own assumptions, strengths, and blind spots.

The 5-Layer Engine Behind Every Score

Understanding AI assessment accuracy means understanding what happens the moment you hit “Submit.” Here is how modern AI assessment systems actually process your responses.

- Natural Language Processing (NLP) for Résumé Parsing

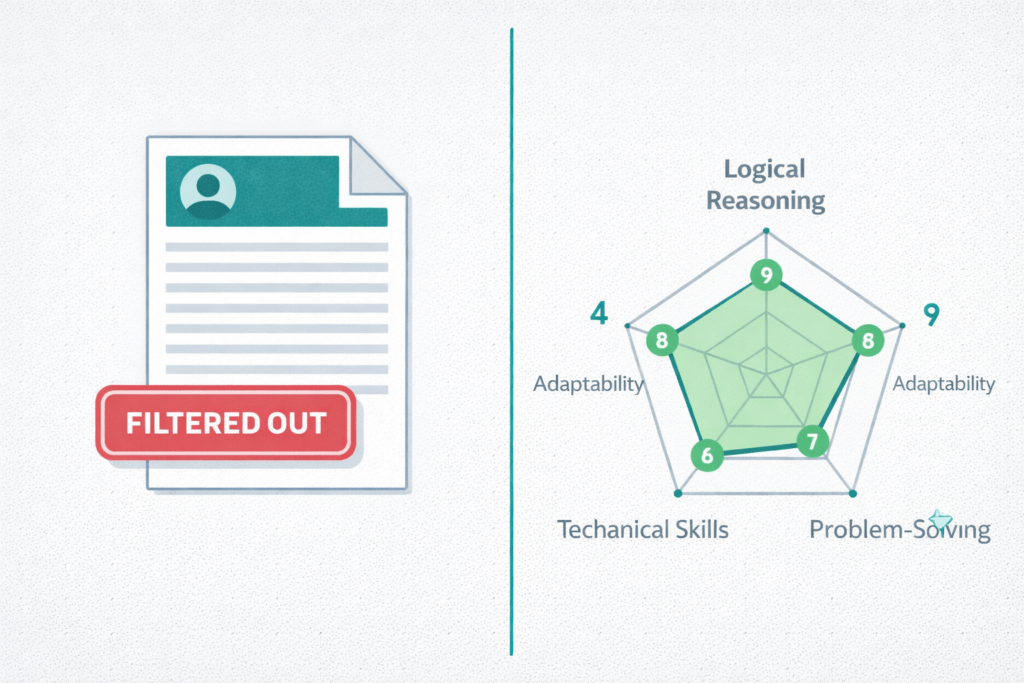

Before you even take a test, your résumé is parsed by an NLP engine. It extracts entities (job titles, skills, institutions, durations) and maps them to a structured candidate profile. The accuracy of this first pass directly determines whether your résumé clears the threshold. A poorly formatted résumé or unconventional section heading can confuse the parser and sink a strong candidate.

- Adaptive Question Delivery

Many enterprise AI assessment platforms use Computerised Adaptive Testing (CAT). The system adjusts question difficulty in real time based on your previous answers. Get a question right, and the next one gets harder. Get it wrong, and the difficulty drops. This approach can improve AI assessment accuracy considerably because it homes in on your true competency level instead of giving every candidate the same fixed set of questions.

- Behavioural Signal Capture

Here is what most candidates do not know: the system is not just scoring your answers. It is logging your response latency (how long you paused before answering), your answer revision patterns, your scrolling behaviour, and, on some platforms, even keystroke dynamics. These behavioural signals are fed into the scoring model as additional evidence of cognitive style, confidence, and stress response.

- Predictive Scoring Models

Once your responses and behavioural data are captured, a machine learning model, typically trained on historical hiring and performance data, generates a predictive score. This is where AI assessment accuracy gets tricky. If the training data reflects past hires from specific demographics or universities, the model may encode those patterns as proxies for “good candidates,” which can disadvantage freshers from non-traditional backgrounds.

- Bias Calibration Loops

Responsible AI assessment platforms include fairness testing and bias audits as part of their pipeline. These loops compare score distributions across demographic groups and recalibrate the model if disparities are detected. However, the quality of this calibration varies enormously between vendors — making platform choice a key determinant of true AI assessment accuracy.
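To make the idea of a bias audit concrete, here is a minimal sketch of one common fairness check: comparing pass rates between groups and flagging the model when the lowest rate falls below four-fifths of the highest. This is an illustration only. The group labels, scores, cutoff, and function names are all invented for the example, and real vendors may use different or more sophisticated tests.

```python
from collections import defaultdict

def selection_rates(records, cutoff):
    """Share of candidates in each group whose score meets the cutoff."""
    totals = defaultdict(int)
    passed = defaultdict(int)
    for group, score in records:
        totals[group] += 1
        if score >= cutoff:
            passed[group] += 1
    return {group: passed[group] / totals[group] for group in totals}

def adverse_impact_ratio(rates):
    """Lowest selection rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody passed in any group, so there is no gap to measure
    return min(rates.values()) / highest

# Hypothetical scores, invented purely for illustration.
records = [
    ("group_a", 82), ("group_a", 74), ("group_a", 65), ("group_a", 91),
    ("group_b", 71), ("group_b", 58), ("group_b", 62), ("group_b", 88),
]

rates = selection_rates(records, cutoff=70)
ratio = adverse_impact_ratio(rates)
needs_review = ratio < 0.8  # four-fifths heuristic, used here as an assumed threshold

print(rates, round(ratio, 2), needs_review)
```

A check like this only detects a disparity. Deciding how to recalibrate the model afterwards is a separate and much harder step, which is one reason calibration quality differs so much between platforms.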

Where AI Assessment Accuracy Breaks Down

No system is perfect, and AI assessment accuracy has well-documented failure points that every candidate and employer should understand.

“The score you see is only as accurate as the data the model was trained on. Garbage in, garbage out — even when the garbage is dressed up in sophisticated algorithms.”

Training data bias is the most significant challenge. If historical hires were skewed toward certain profiles, the model learns to favour those profiles – not because they are objectively better, but because they were previously chosen. AI assessment accuracy also suffers from context collapse: a test designed for a mid-level role in one industry may be misapplied to entry-level candidates in another, producing scores that do not reflect actual fit.

There is also the problem of gaming detection overreach. Systems that flag response patterns as “suspicious” (e.g., unusually fast answers) may penalise confident, high-performing candidates who simply know the material well.
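A small sketch shows why this happens. Assume a hypothetical rule that flags any answer submitted in under five seconds. The threshold, field names, and data below are invented for illustration and do not describe any specific platform.

```python
def flag_fast_answers(responses, min_seconds=5.0):
    """Naive rule: treat any answer faster than min_seconds as suspicious."""
    return [r for r in responses if r["seconds"] < min_seconds]

# Hypothetical response log for one well-prepared candidate.
responses = [
    {"question": "q1", "seconds": 3.2, "correct": True},
    {"question": "q2", "seconds": 4.1, "correct": True},
    {"question": "q3", "seconds": 12.5, "correct": False},
    {"question": "q4", "seconds": 2.8, "correct": True},
]

flagged = flag_fast_answers(responses)
flagged_but_correct = [r["question"] for r in flagged if r["correct"]]

print(flagged_but_correct)
```

Every flagged answer here is also a correct one. A fixed speed threshold cannot tell fluency apart from guessing, so the candidate who knows the material best collects the most flags.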

How to Prepare When an Algorithm Is Judging You

Knowing how AI assessment accuracy works gives you a genuine strategic advantage. Here is what that looks like in practice.

Format your résumé for NLP parsers. Use standard section headings (Experience, Skills, Education), avoid tables or multi-column layouts, and use keywords from the actual job description. The AI is looking for matches, not creativity.
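You can run a rough self-check before you apply. The sketch below is a simplified stand-in for a parser, not how any real résumé engine works: it looks for the three standard headings on lines of their own and measures how many job-description keywords appear in the text. The sample résumé, keyword list, and function names are made up for the example.

```python
import re

STANDARD_HEADINGS = {"experience", "skills", "education"}

def find_headings(resume_text):
    """Return the standard headings that appear on a line by themselves."""
    found = set()
    for line in resume_text.splitlines():
        cleaned = line.strip().rstrip(":").lower()
        if cleaned in STANDARD_HEADINGS:
            found.add(cleaned)
    return found

def keyword_coverage(resume_text, job_keywords):
    """Fraction of job keywords found as whole words, plus the matched keywords."""
    if not job_keywords:
        return 0.0, []
    words = set(re.findall(r"[a-z0-9+#]+", resume_text.lower()))
    hits = [k for k in job_keywords if k.lower() in words]
    return len(hits) / len(job_keywords), hits

# Hypothetical résumé with one unconventional heading ("My Journey").
resume = """Experience
Data Analyst Intern, Example Corp, 6 months

Skills
Python, SQL, Excel

My Journey
B.Sc. Statistics
"""

missing = STANDARD_HEADINGS - find_headings(resume)
coverage, hits = keyword_coverage(resume, ["python", "sql", "tableau"])

print(missing, hits, round(coverage, 2))
```

In this example the education details sit under "My Journey", so the check reports "education" as missing, and "tableau" is the one keyword from the job description that the résumé never mentions.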

Practise in adaptive testing environments. If you always study at a comfortable pace with reference materials open, you will struggle under the timed, escalating pressure of a CAT system. Practise under conditions that mirror real AI-evaluated assessments, ideally on a platform specifically designed to simulate them.
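To get a feel for what "escalating pressure" means, here is a deliberately simplified sketch of adaptive delivery: difficulty moves up one level after a correct answer and down one level after a wrong one. Production CAT engines typically rely on statistical models such as item response theory rather than a fixed step, so treat the 1 to 10 scale, the starting level, and the function names as assumptions made for illustration.

```python
def next_difficulty(current, was_correct, lowest=1, highest=10):
    """Step difficulty up after a correct answer, down after a wrong one."""
    step = 1 if was_correct else -1
    return max(lowest, min(highest, current + step))

def run_session(answers, start=5):
    """Return the difficulty level served for each question in a session."""
    served = []
    level = start
    for was_correct in answers:
        served.append(level)
        level = next_difficulty(level, was_correct)
    return served

# True means the candidate answered that question correctly.
path = run_session([True, True, False, True, False, False])

print(path)
```

Notice that the difficulty hovers around the level where the candidate starts getting answers wrong. That is the point of adaptive testing: most of your time is spent on questions at the edge of your ability, which is why it feels harder than a fixed-difficulty quiz.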

Be consistent, not performative. Because the system logs behavioural signals, trying to “act” a certain way will introduce inconsistencies that sophisticated models can detect. Genuine competence, practised under pressure, is a more reliable strategy than rehearsed performance.

Practise Under Real AI Assessment Conditions

Newtum’s AI Assessment Tool is built to simulate the exact scoring environments used by top employers in 2026. Get your skill baseline, identify your gaps, and walk into every AI-evaluated hiring round fully prepared.

The Future of AI Assessment Accuracy

The trajectory of AI assessment accuracy is upward, but not uniformly. Advances in multimodal AI – systems that can evaluate voice, video, and text together – are expanding the data available for scoring. Explainability frameworks are becoming a regulatory expectation in markets like the EU, meaning platforms will increasingly be required to tell candidates why they scored the way they did.

For employers, the shift toward continuous, skills-based evaluation rather than one-time hiring filters is redefining what AI assessment accuracy is even for. The question is no longer just “can this person do the job today?” but “how quickly will they grow into what we need tomorrow?”

For freshers, this is significant. A strong performance on an AI-evaluated assessment is becoming more valuable than a prestigious degree, particularly in technology, data, and product roles where skills are demonstrable and measurable.

Conclusion – The Algorithm Is Already Watching

AI assessment accuracy is real, meaningful, and consequential – but it is not infallible. Behind every score is a stack of engineering decisions, training data choices, and calibration trade-offs made by the platform vendor. Understanding those decisions transforms you from a passive test-taker into an informed candidate who can strategically prepare for what the algorithm is actually measuring.

The good news? When you understand how the engine works, you can learn to perform authentically within it. And that is precisely what next-generation tools – like the AI Assessment Tool launching soon on Newtum – are designed to help you do: practise in conditions that mirror reality, so that when the algorithm meets you, it meets you at your best.